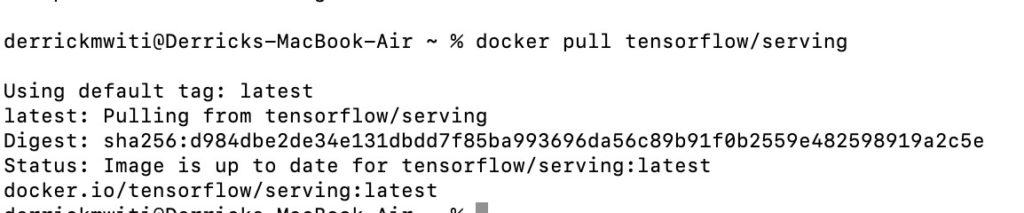

Tensorflow Serving by creating and using Docker images | by Prathamesh Sarang | Becoming Human: Artificial Intelligence Magazine

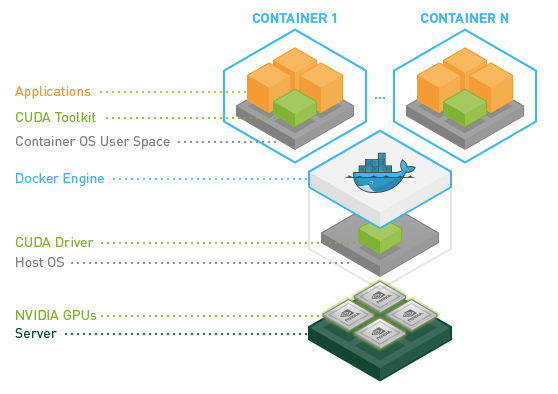

GitHub - EsmeYi/tensorflow-serving-gpu: Serve a pre-trained model (Mask-RCNN, Faster-RCNN, SSD) on Tensorflow:Serving.

Deploy your machine learning models with tensorflow serving and kubernetes | by François Paupier | Towards Data Science

Kubeflow Serving: Serve your TensorFlow ML models with CPU and GPU using Kubeflow on Kubernetes | by Ferdous Shourove | intelligentmachines | Medium

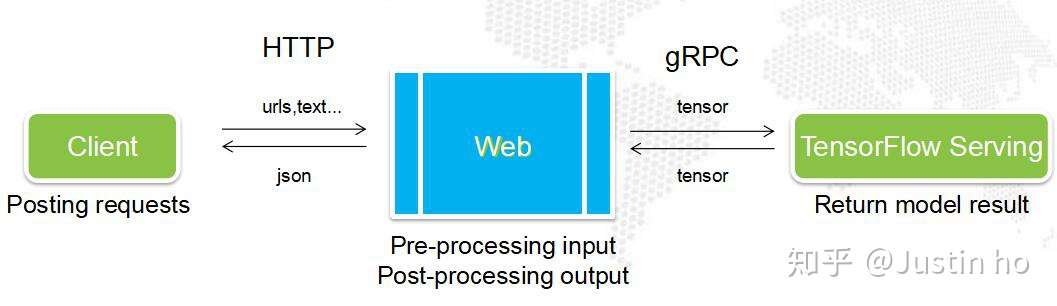

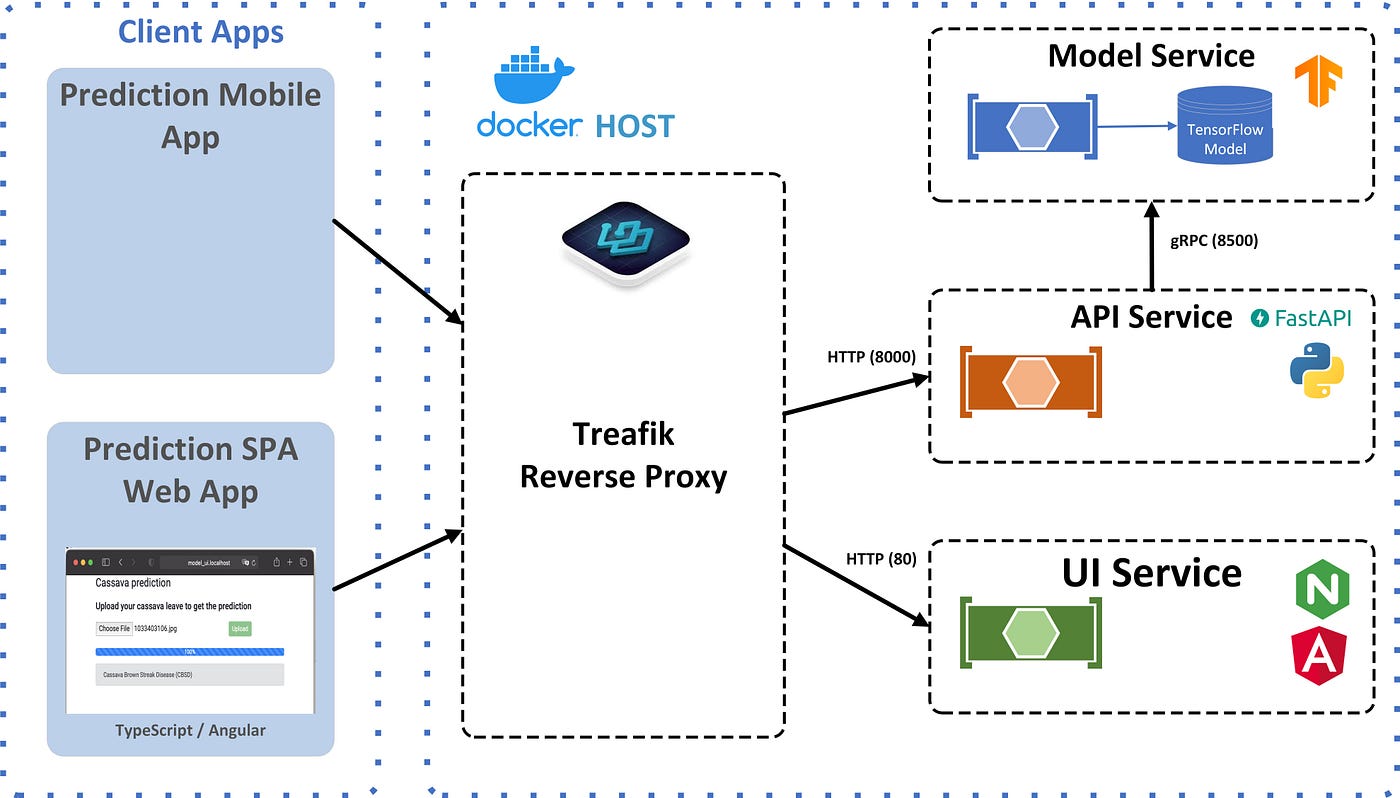

Reduce computer vision inference latency using gRPC with TensorFlow serving on Amazon SageMaker | AWS Machine Learning Blog

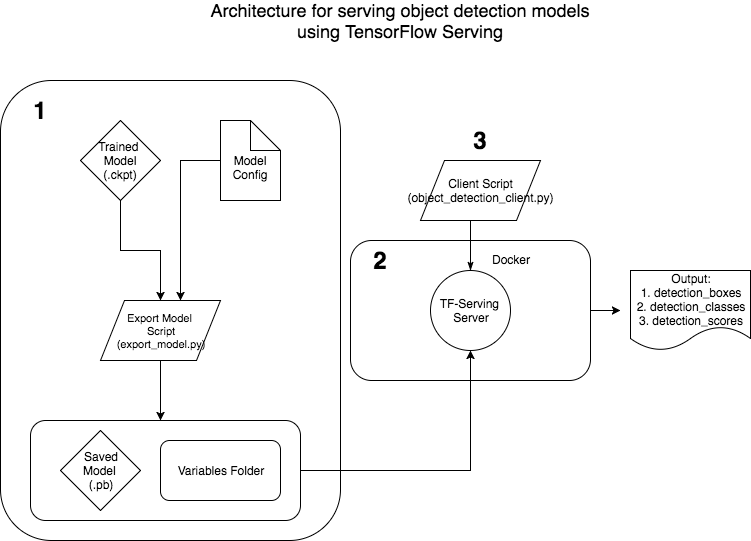

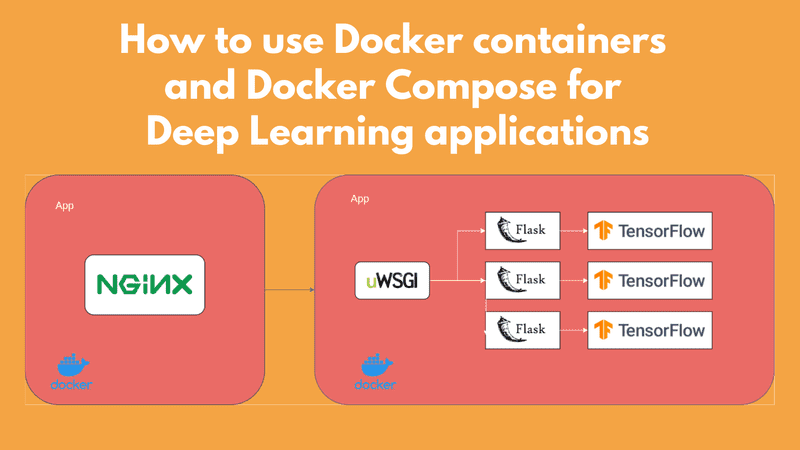

How To Deploy Your TensorFlow Model in a Production Environment | by Patrick Kalkman | Better Programming
